AI Platform Strategy: Beyond the Wrapper and Avoiding the "Innovation Lag"

The AI landscape is moving at breakneck speed. It feels like every week there’s a new model, a new framework, or a "killer" feature that promises to change everything. But for many organizations, the initial excitement of building a quick LLM Wrapper is starting to fade, replaced by a more complex reality: how do we build an AI platform that actually scales and provides long-term value?

I recently gave a talk in Perth on this exact topic, and I wanted to share the core strategy we use at Cohezion to help our clients move from "AI experiments" to robust Internal AI Platforms.

The Wrapper Trap: Why You Might Be "Sinking"

A common starting point is the LLM Wrapper—a simple interface that connects your users to an external LLM API.

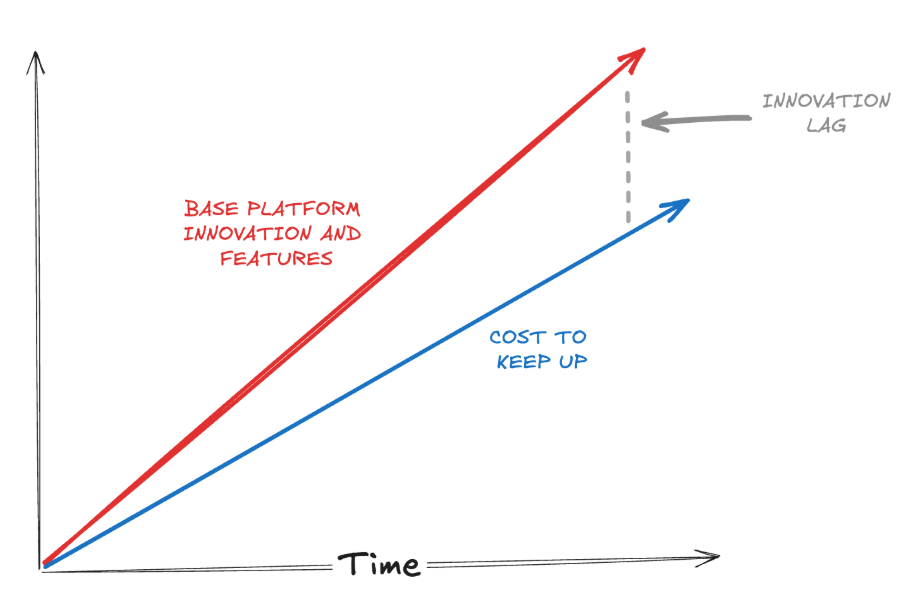

The Pros? It’s safe, cost-effective, and gives you immediate control. The Cons? You’re often hit with "Innovation Lag".

As base platforms (like OpenAI or Anthropic) ship new features, the cost of keeping your custom wrapper in step with them starts to climb. Eventually, you reach a "Sink or Swim" moment, where the cost of maintaining your wrapper outweighs the value it adds.
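To make the wrapper pattern concrete, here is a minimal Python sketch. It assumes a hypothetical `CompletionProvider` interface with a single `complete(prompt)` method and uses a `FakeProvider` stand-in instead of a real vendor SDK, so it runs offline. The names `LLMWrapper`, `blocked_terms` and `audit_log` are illustrative and not part of any library. The wrapper adds the kind of control listed among the pros: a fixed system prompt, a simple input policy and an audit trail.

```python
from dataclasses import dataclass, field
from typing import Protocol


class CompletionProvider(Protocol):
    """Hypothetical interface: the only capability this wrapper exposes."""

    def complete(self, prompt: str) -> str: ...


class FakeProvider:
    """Offline stand-in for an external LLM API (not a real SDK)."""

    def complete(self, prompt: str) -> str:
        return f"[model reply to {len(prompt)} chars of prompt]"


@dataclass
class LLMWrapper:
    provider: CompletionProvider
    system_prompt: str
    blocked_terms: tuple[str, ...] = ()  # assumed to be lowercase
    audit_log: list[str] = field(default_factory=list)

    def ask(self, user_input: str) -> str:
        # Control point 1: a simple input policy, checked before any API call.
        lowered = user_input.lower()
        if any(term in lowered for term in self.blocked_terms):
            self.audit_log.append(f"BLOCKED: {user_input}")
            return "Sorry, that request is not permitted."
        # Control point 2: the same system prompt is prepended to every request.
        prompt = f"{self.system_prompt}\n\nUser: {user_input}"
        self.audit_log.append(f"SENT: {user_input}")
        return self.provider.complete(prompt)


wrapper = LLMWrapper(
    FakeProvider(),
    system_prompt="You are a helpful internal assistant.",
    blocked_terms=("salary",),
)
print(wrapper.ask("Summarise our leave policy"))
print(wrapper.ask("What is the CEO's salary?"))
print(wrapper.audit_log)
```

Note the narrow interface: the wrapper only knows how to send a prompt and receive text. Any new capability the base platform ships beyond that (tool calling or image input, for example) has to be plumbed through this interface by hand before your users benefit from it, and that ongoing rework is where the innovation lag accumulates.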

Building for Your Specific Context

To avoid the lag, we suggest shifting focus toward Internal AI Platforms that address friction points specific to your business context. Instead of just wrapping a model, ask yourself:

What friction points are we actually trying to address?

How can we make internal knowledge more accessible?

What specific capabilities (NLP, Computer Vision, Robotics) does our business strategy actually require?

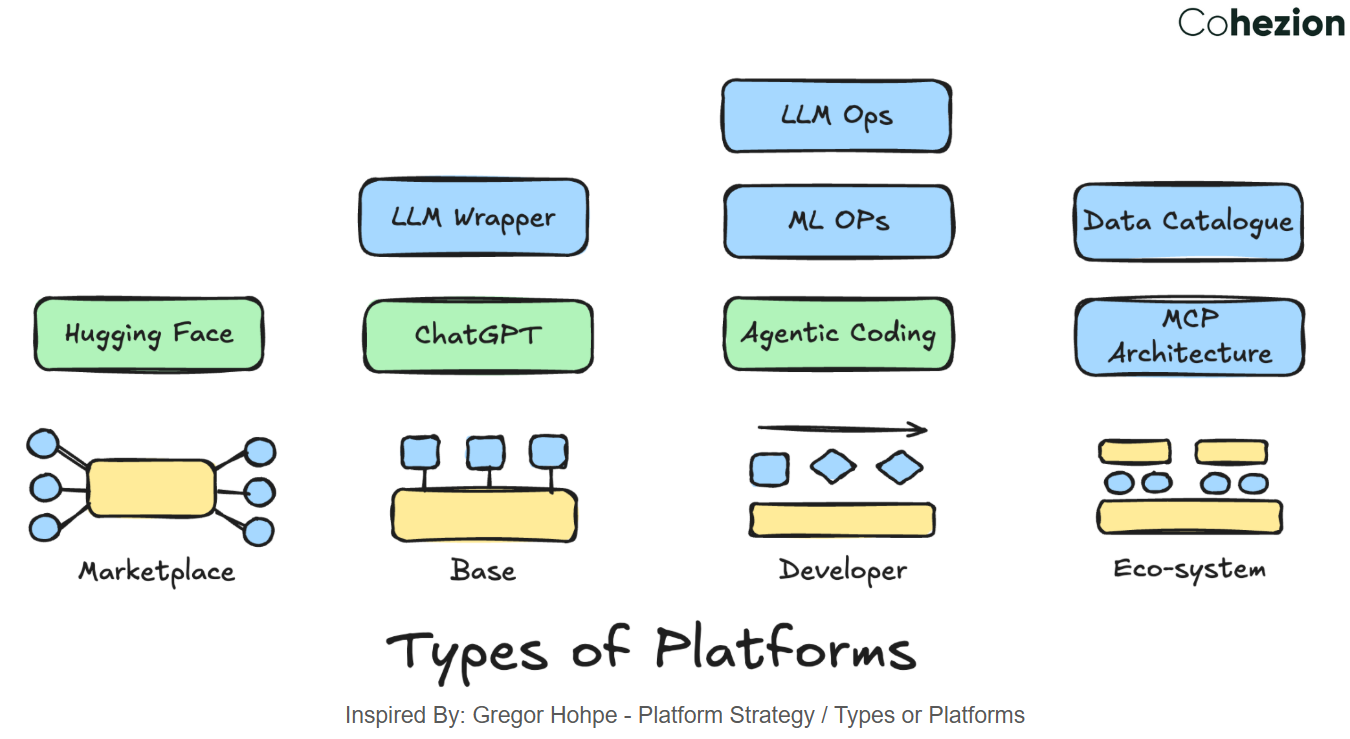

AI Platform Types

ACED Your Strategy

A strategy that doesn't make decisions is just a list of wishes. To make your AI platform strategy meaningful, we use the ACED framework (inspired by Gregor Hohpe):

Alignment: Your IT strategy must align with your broader business goals.

Clarity: It has to be easily understood by a broad audience, not just the "engine room".

Evolution: Strategies aren't set in stone; they must evolve as constraints are removed.

Decisions: A real strategy makes the hard calls on what not to do.

Technical Choices: RAG vs. Fine-Tuning

One of the biggest decisions you'll face is how to ground AI in your company’s data.

RAG (Retrieval-Augmented Generation) is great for up-to-date information and large datasets, but every query pays for a retrieval step and a longer prompt, which can create performance and processing cost hurdles.

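Fine-Tuning offers deeper domain knowledge and is often faster than RAG at query time, since there is no retrieval step, but it comes with significant compute costs (GPUs) and the risk of "Catastrophic Forgetting", where the model loses general capabilities it had before fine-tuning.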
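The retrieval step behind RAG can be sketched in a few lines of Python. This is a simplified illustration, not a production design: real systems typically use an embedding model and a vector store, whereas this sketch swaps in bag-of-words cosine similarity so it needs only the standard library. The documents and the helper names (`retrieve`, `build_prompt`) are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and split on anything that is not a letter or digit.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two term-frequency vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    # Retrieval: score every document against the query and keep the top k.
    q = Counter(tokenize(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    # Augmentation: the retrieved passages are pasted into the prompt,
    # which would then be sent to the model for generation (not shown here).
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = [  # hypothetical internal knowledge base
    "Expense claims must be submitted within 30 days.",
    "The VPN client is required for remote access.",
    "Annual leave requests go through the HR portal.",
]
print(build_prompt("How do I request annual leave?", docs, k=1))
```

Every query pays for a scoring pass over the corpus, and the retrieved passages make the prompt longer; that per-query overhead is the source of the performance and processing cost hurdles described for RAG.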

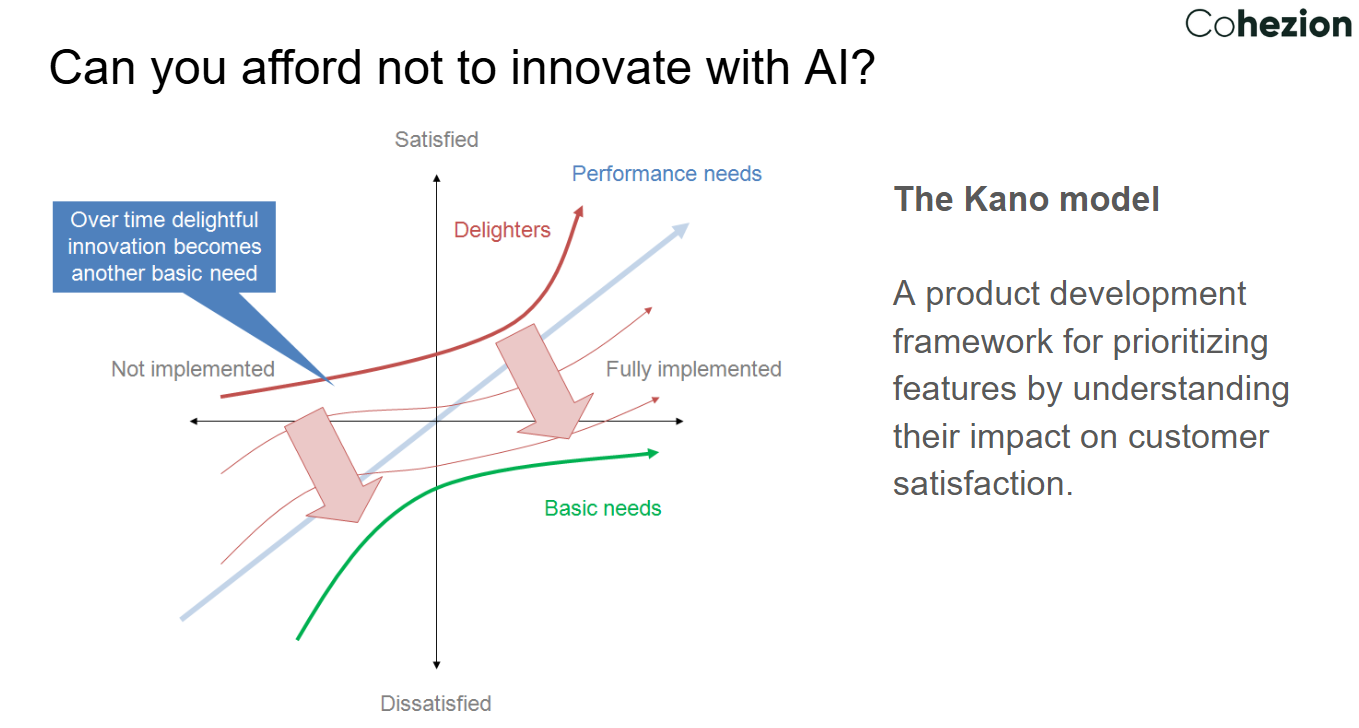

The Kano Model: From "Delighters" to "Basic Needs"

Kano Model for Product Development

In product development, we often use the Kano Model to prioritize features. In the world of AI, a feature that feels like a "Delighter" today (like a semantic data assistant that turns natural-language questions into SQL) will quickly become a "Basic Need" tomorrow. The question isn't just "should we innovate?" but "can we afford not to?"
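As an illustration of how a feature lands in a Kano category, the sketch below uses the standard Kano evaluation table: each respondent answers a functional question ("How would you feel if the feature were present?") and a dysfunctional one ("...if it were absent?"), and the pair of answers maps to a category. It then applies a simple majority rule, which is one common aggregation choice among several. The survey responses for the text-to-SQL assistant are hypothetical, chosen only to show a Delighter drifting toward a Basic Need.

```python
from collections import Counter

# Standard Kano evaluation table. Rows: answer to the functional question
# (feature present); columns: answer to the dysfunctional question (absent).
ANSWERS = ("like", "must-be", "neutral", "live-with", "dislike")
TABLE = {
    "like":      ("Q", "A", "A", "A", "O"),
    "must-be":   ("R", "I", "I", "I", "M"),
    "neutral":   ("R", "I", "I", "I", "M"),
    "live-with": ("R", "I", "I", "I", "M"),
    "dislike":   ("R", "R", "R", "R", "Q"),
}
LABELS = {
    "A": "Delighter",    # attractive
    "O": "Performance",  # one-dimensional
    "M": "Basic Need",   # must-be
    "I": "Indifferent",
    "R": "Reverse",
    "Q": "Questionable",
}


def classify(functional: str, dysfunctional: str) -> str:
    # Map one respondent's answer pair to a Kano category code.
    return TABLE[functional][ANSWERS.index(dysfunctional)]


def categorize(responses: list[tuple[str, str]]) -> str:
    # Majority rule: the most frequent category across respondents wins.
    counts = Counter(classify(f, d) for f, d in responses)
    code, _ = counts.most_common(1)[0]
    return LABELS[code]


# Hypothetical survey answers for a text-to-SQL assistant, early vs. later.
early = [("like", "neutral"), ("like", "live-with"), ("like", "neutral"), ("neutral", "neutral")]
later = [("like", "dislike"), ("neutral", "dislike"), ("live-with", "dislike"), ("must-be", "dislike")]
print(categorize(early))
print(categorize(later))
```

Re-running the same survey periodically is one way to detect the shift the Kano Model predicts: once most respondents would dislike the feature's absence, it has become a Basic Need rather than a differentiator.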

How Cohezion Can Help

At Cohezion, we specialize in bridge-building—connecting your organization's high-level strategy to the technical "engine room". Whether you need help building a Capability Map, deciding between RAG and Fine-Tuning, or establishing LLM Ops to monitor and validate your models, we’re here to help you navigate the complexity.

If you need help with your AI strategy or delivery and you're looking for practical, evidence-based guidance, get in touch with us!